
Your go-to fix for tech hype, fluff and comms disasters. Laugh at the cringe, avoid the chaos.

CHITTER CHATTER

Not Good News

Flatlining the Org Chart. Less Red Tape. More Burnout.

I used to think a flat org structure was good. And to a large degree, I still do. I’ve always been someone who likes autonomy; I don’t need to be micromanaged. I remember once working on a big project with highly capable, clever and creative people. It was a great team. No big egos fighting for control. Then a few executive seagulls decided to fly in and deposit their feedback, which was counter to what we had developed. In that context, former Netflix Chief Talent Officer Patty McCord’s comment that “top-down control can hinder innovation” rings true. As the old saying goes, “Too many cooks spoil the broth.”

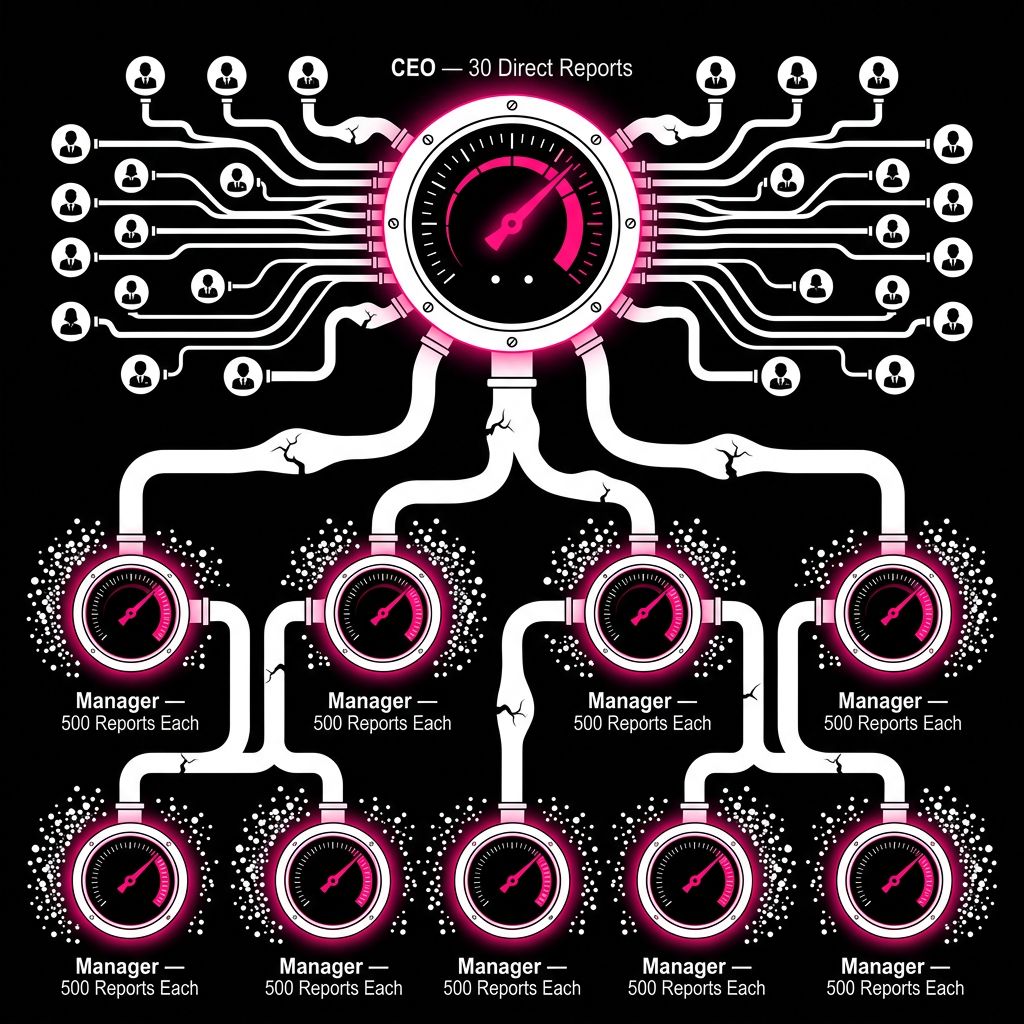

How flat do we go? Recently, Meta and Block have been rebranding managers (what’s left of them) as ‘org leads’ or ‘player-coaches’. But culling middle managers might not be such a good idea. The few managers who remain end up overworked and burnt out. And in fairly large organisations, the leadership team (CEO, CFO, CIO, etc.) can end up with too many direct reports, which leaves less time for strategy and more spent on people admin. Humans are needy creatures. We seek affirmation, advice and direction. And we look to whoever is ‘in charge’ to provide them.

Flat orgs: Freedom to work or more work to choke on?

While there’s a lot of hype right now about AI driving flat org structures, I think we’re getting ahead of ourselves. As I reported in previous editions of this newsletter, many tech layoffs are happening to address other business problems, not because AI can do the work of 50 employees. That doesn’t mean AI-driven cuts aren’t happening too. I think we still need managers (or whatever trendy new title you bestow on them) in place. But they have to be carefully chosen because not everyone knows how to lead, encourage, and get the best out of people.

Tech Fails

Robotaxis: Choking Traffic & Breaking Bones

As someone who is slightly claustrophobic, my worst nightmare would be getting stuck in a lift or a robotaxi. Recent outages in China and San Francisco, where software glitches left fleets paralysing cities, don’t fill me with confidence. Beyond this is the safety issue and tech firms’ lack of accountability for it. For example, in January this year, US federal investigators opened a probe after a robotaxi struck a child in a school zone. Luckily, she suffered only minor injuries, but still. If a human driver did this, they’d face possible charges and a suspended licence. With AI, we just get a software patch. Still, you can’t pump the brakes on innovation. Human lives are a sacrifice the board is perfectly comfortable making.

Sam Altman: Public Offering Raises Issues of Moral Failure

OpenAI’s CEO is disliked and even hated by many people. A New Yorker investigation published this month portrays him as a pathological liar driven by aggressive commercial growth over AI safety. A board member of OpenAI was quoted as saying that Sam Altman is “unconstrained by truth” and has an “almost sociopathic lack of concern for the consequences that come from deceiving someone.” These claims have been around for a while, yet Sam Altman prevails despite them. This week, he was also the victim of an unsuccessful physical attack when a young man threw a Molotov cocktail outside his home and made threats outside OpenAI’s headquarters. Negative press and physical retaliation are just another indication that a large percentage of people fear AI and greatly distrust its prophets, especially Sam Altman.

Weird Tech

Brainless Human Clones

This one’s a bit dystopian, but apparently R3 founder John Schloendorn has been secretly “pitching a startling, medically graphic and ethically charged vision for what he’s called ‘brainless clones’ to serve the role of backup human bodies”, as reported by MIT Technology Review. “Imagine it like this: a baby version of yourself with only enough of a brain structure to be alive in case you ever need a new kidney or liver.” He apparently also said, “In the future, one brainless clone could give birth to another.” Ugh. Mere hype or something more sinister?

A possible future: Your own soulless human clone

SUBTEXT

Tech Waffle Torture

The Waffle: “To unlock our full potential, we’re transitioning to a flat organisational structure, removing the friction of traditional hierarchy to empower every individual to move at the speed of thought.” — Tech CEO in a Town Hall

Translated: We’ve fired everyone whose job was to say ‘no’ to your bad ideas, so now you have 40 direct reports and no hope of a promotion. If you aren’t being made redundant to ‘streamline workflows’, you’re being rebranded. You’re not a ‘Director’ anymore; you’re now a Velocity Architect, Cultural Conduit, or a Player-Coach-Referee-Cleaner. 70% more drudgery, 20% more burnout.

Tech Ailments

Flat-Earth Dysmorphia [flat-urth dis-mawr-fee-uh]

A psychological corporate delusion where leaders believe that by deleting middle management, the laws of physics will bend and work will simply ‘flow.’ Common among CEOs who find hierarchies sub-optimal and want to feel like a scrappy startup again. Symptoms include eye twitches, nausea, and the certainty that you’re having a heart attack. Side effects: decision-making paralysis and ‘shadow hierarchies’ (where the loudest person wins). Cure: one week of the CEO personally approving every expense report, followed by the realisation that adults occasionally need a boss.

Tech Terms Explained

BullShitBench Test: A new AI benchmark that asks: can machines tell when something is, well, BS? You feed an AI nonsensical questions and see if it will push back, “or confidently plow ahead without spotting the BS.”

Testing AI nonsense. If only we had one for humans.

THE SHALLOW END

Posturing / Virtue Signalling

Responsible AI Washing: For three years, AI leaders have warned that advanced models could cause profound harm, require regulation, and even pose existential risk. At the same time, their companies have kept releasing stronger systems on a fast cadence, revising voluntary safety frameworks as they go, and drawing criticism that safety language can function as positioning as much as restraint. The criticism isn’t that AI firms never do safety work. It’s that safety is often presented like a moral brand, while commercial release pressure keeps setting the pace.

Pop Culture Meets Tech

The title should really be ‘Pop Culture Rejects Tech’, though that’s not entirely true: old tech has staged a comeback in recent years, which I find fascinating. Gen Z is embracing retro tech “that they would not get notifications on, like iPods, wired headphones and Polaroid cameras.” Welcome to my world when I was young. 😄 I like this trend as much as the dumb phone uptake, which I’ve covered previously.

DR COMMS PRESCRIBES

Dear Dr Comms

My company sent me on an all-expenses-paid trip to Manila to help set up and train an offshore team. I smiled, documented, transferred knowledge, and generally behaved like a woman who believed she still had a future. Two weeks after I got back, they made me redundant. Apparently, my replacement onboarding was exemplary. I’m furious and humiliated. How do I get over being used so efficiently? Yours, Trained Then Terminated

Dear Terminated

That sucks - for you. Let’s consult some specialists:

🏙️ Town planner:

“You approved a road upgrade, then discovered it was a bypass around you. Terrible for morale. Excellent for traffic flow.”

🎸 Band roadie:

“You hauled the gear, set the lights, taped the cords, and then got told the tour bus was full. My advice? Never do a flawless soundcheck for people planning your silence.”

🐝 Beekeeper:

“You opened the hive, did the work, and got stung in the face by your own bees. It happens. The trick is not to stand there calling it collaboration while your head swells shut.”

Got a problem no sane Comms Doctor should touch? Email [email protected] and I’ll assemble a panel of deeply unqualified professionals to sort you out.

Cartoon of the Week

Office Life Right Now

BIN THIS…

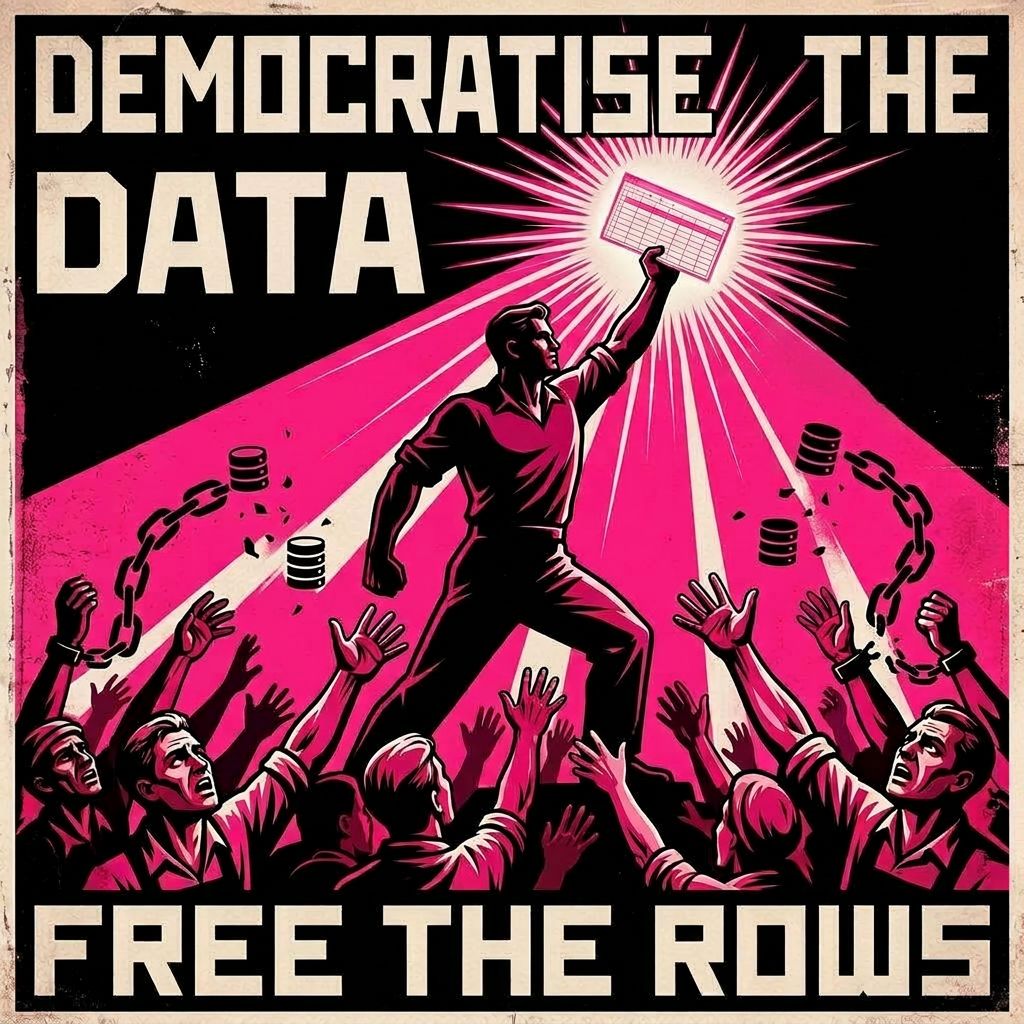

Democratising Data / Innovation

Corporate speak claiming to ‘democratise data’ or ‘democratise innovation’ is rarely about power to the people; it’s a moralising word used to hide an aggressive commercial expansion.

By framing a standard sales goal as a grassroots liberation movement, tech companies pretend they’re storming the Bastille when they’re actually just expanding their TAM (Total Addressable Market).

Usually, ‘democratising’ is just code for: "We made the UI slightly less confusing so we can justify charging 5,000 more people for a subscription."

It’s the ultimate linguistic sleight of hand, repackaging the act of selling a SaaS licence as a noble blow against elitism.

It sucks big time because it co-opts the language of actual social progress to sell a dashboard.

Unless your software is literally toppling a dictatorship, stop calling it a democracy. Bin it.

We fight for the freedom to charge clients more!

Know someone who lives for this kind of nonsense? Forward this email to them and help me spread the dysfunction.

You can also subscribe to my other newsletter, Lead Different, for a serious take on strategic communications in B2B tech.